When Expertise and Authority Diverge

In 1986, engineers responsible for the NASA Space Shuttle Challenger advised against launch. The launch-day temperature was lower than the engineering team considered acceptable. Testing had demonstrated that O-ring resilience degraded in cold conditions. The engineering teams understood the failure mechanism and had reviewed the data repeatedly. Their recommendation was clear: delay the launch. Despite this warning, senior decision makers chose to proceed.

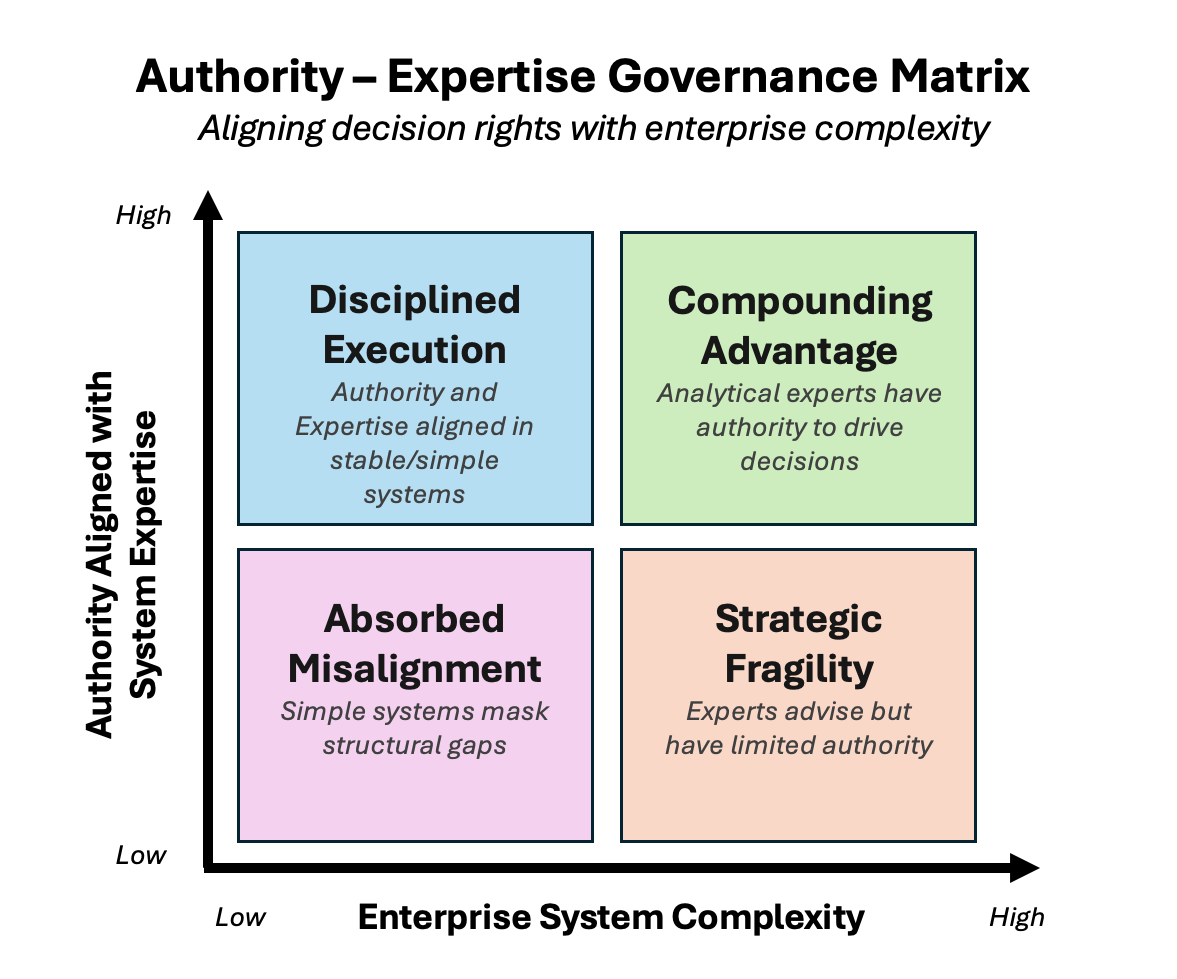

The tragedy that followed was not caused by an absence of data or a lack of technical competence. It resulted from a structural misalignment between those who understood the system most deeply and those who held final authority.

This pattern is not confined to the aerospace industry. It appears wherever complex systems are governed by capable leaders who lack full visibility into the technical mechanisms at the heart of risk and performance. As organizations become increasingly dependent on data and artificial intelligence, a similar misalignment is emerging in how technical leadership is positioned within the enterprise.

Data Is No Longer the Constraint

Most large organizations have invested substantially in analytics capabilities. They operate on cloud data platforms, with machine learning infrastructure, experimentation frameworks, and real-time reporting environments. They have statisticians, economists, engineers, and data scientists capable of understanding complex problems. As a result, capability is no longer scarce, but interpretation remains a risk.

Enterprise performance now depends on understanding interdependent systems: pricing interacting with loyalty behavior; marketing investment shaping long-term customer value; risk models influencing capital allocation; experimentation guiding strategic commitments. These systems are non-linear and adaptive. Signals are probabilistic rather than definitive, and small errors propagate rapidly at scale.

In this environment, data does not speak for itself. It requires disciplined interpretation and structured governance. Mature and sophisticated organizations empower technical leaders to interpret for the business. Yet in many organizations, analytics leadership remains positioned as a technical support function rather than as a steward of enterprise decision architecture. Teams are asked to measure outcomes and validate initiatives but are rarely granted authority over the assumptions embedded upstream. They quantify performance, yet do not consistently shape the frameworks that determine it. The distinction may appear subtle. Its consequences are not.

Organizations that prioritize presentation over precision risk confusing fluency with understanding. At the executive level, general familiarity with metrics may be sufficient for directional decisions. Competitive advantage, however, is rarely found in generalities. It is found in the nuances: elasticity thresholds, marginal effects, risk tolerances, and causal mechanisms that only technical leaders are positioned to interpret fully. The less obvious decisions, informed by deeper analytical insight, often differentiate market leaders from their peers.

The Governance Gap

The issue is not whether executives value data. It is how decision authority is structured. When decision rights are separated from technical comprehension, organizations introduce latent risk. Those with the deepest understanding of model assumptions, causal inference, statistical uncertainty, and incremental impact frequently serve as advisors rather than governing actors.

Three risks follow:

Narrative coherence can override empirical constraint. Executives are responsible for clarity of direction and organizational momentum. Analytics leaders are responsible for probabilistic rigor and boundary conditions. Without structural integration, confidence can outpace evidence.

Capital allocation becomes vulnerable to systematic misinterpretation. If incremental lift is overstated, elasticity misread, or attribution misassigned, investment decisions become directionally flawed. Over time, return on invested capital erodes not because capital is insufficient, but because interpretation is incomplete.

Model risk grows with scale. Algorithmic systems increasingly influence pricing, credit decisions, personalization, and forecasting. Small structural biases scale quickly. Without executive-level stewardship of analytical integrity, weaknesses remain obscured until performance deterioration becomes material.

These risks rarely present as immediate failure. They compound gradually. Fractures emerge over planning cycles, capital budgets, and performance reviews, often becoming visible only after strategic momentum has shifted.
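
The second risk can be made concrete with a small arithmetic sketch. Every figure below is hypothetical and chosen only for illustration: a campaign is credited with a 5% incremental revenue lift when the true lift is 3%. The function name `campaign_roi` and its parameters are assumptions of this example, not an established method.

```python
def campaign_roi(baseline_revenue, lift, margin, spend):
    """Return campaign ROI as (incremental profit - spend) / spend."""
    incremental_profit = baseline_revenue * lift * margin
    return (incremental_profit - spend) / spend


# Hypothetical inputs, not drawn from any real organization.
BASELINE_REVENUE = 10_000_000  # annual revenue exposed to the campaign
MARGIN = 0.30                  # contribution margin on incremental revenue
SPEND = 100_000                # campaign cost

reported_roi = campaign_roi(BASELINE_REVENUE, 0.05, MARGIN, SPEND)  # overstated lift
true_roi = campaign_roi(BASELINE_REVENUE, 0.03, MARGIN, SPEND)      # actual lift

print(f"ROI with reported lift: {reported_roi:.0%}")
print(f"ROI with true lift:     {true_roi:.0%}")
```

Under these assumed numbers, a two-point overstatement of lift turns a campaign that loses roughly 10% of its spend into one that appears to return roughly 50%. The error does not shrink the estimate; it reverses the sign of the decision.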

Authority Should Follow System Understanding

High-performing institutions recognize that authority must track technical comprehension. In financial services, quantitative risk oversight is embedded directly into capital governance. In manufacturing, engineering tolerances carry binding authority when safety thresholds are approached. These structures are not symbolic; they exist because complexity demands alignment between expertise and decision rights.

As enterprises become more dependent on advanced analytics and AI, similar alignment becomes necessary. Customer lifetime value models, risk-weighted pricing, experimentation design, and loyalty economics are not ancillary analyses. They define the economic engine of the organization.

When analytics leadership lacks structural authority, interpretation becomes subordinate to instinct. That approach may have been tolerable in slower markets. It is increasingly fragile in competitive, data-intensive environments where marginal gains determine long-term advantage.

From Capability to Stewardship

Boards and CEOs should ask these questions:

Who defines the enterprise decision framework?

Who governs the assumptions embedded in advanced models?

Who sets confidence thresholds for capital deployment?

Who holds authority to recalibrate strategy when empirical evidence contradicts prevailing narratives?

Who oversees model risk as systems scale?

If these responsibilities are diffuse, intelligence is distributed while authority remains centralized.

Embedding analytical stewardship within temporary transformation structures or fragmented reporting lines may appear administratively efficient, but it often creates fracture points in critical decision processes. Governance of complex systems requires continuity, proximity to data, and executive mandate, not episodic oversight.

Analytics leadership must be positioned as an enduring enterprise capability, not a transitional function.

Compounding Insight or Compounding Error

The lesson from Challenger was not that data was unavailable. It was that expertise was advisory while authority resided elsewhere. Modern enterprises face a quieter version of the same structural risk. When analytics leadership is empowered to measure but not to shape decision architecture, organizations separate understanding from control. When expertise and authority converge, insight compounds. Strategic decisions become more resilient, capital more precisely allocated, and risk more transparently managed. When they diverge, error compounds. Not always dramatically, but incrementally across initiatives, budgets, forecasts, and competitive positioning.

In complex systems, distance from the signal tends to increase confidence while decreasing accuracy. Governance structures must counterbalance that tendency by aligning authority with those who understand the system's mechanics. Analytics leadership should not be positioned as an operational adjunct. These leaders make dozens of complex, system-level judgments daily. Granting them structural authority over enterprise decision frameworks reduces risk and unlocks economic potential.

Organizations that embed analytical interpretation at the core of executive authority build durable advantage. Those that do not eventually discover that intelligence without decision rights is indistinguishable from its absence.